Objective

First, I want to give my public website a more professional look and feel.

Next, I want to better organize my documentation and manage my knowledge.

I want to automate the management of my infrastructure via scripts.

I also want to start building an application development and deployment environment on my home server.

For now, I only want to provide three central tools: a Git repository manager for source code, a build server, and a binary registry.

Result

1 - Upgrade public website to a professional look and feel

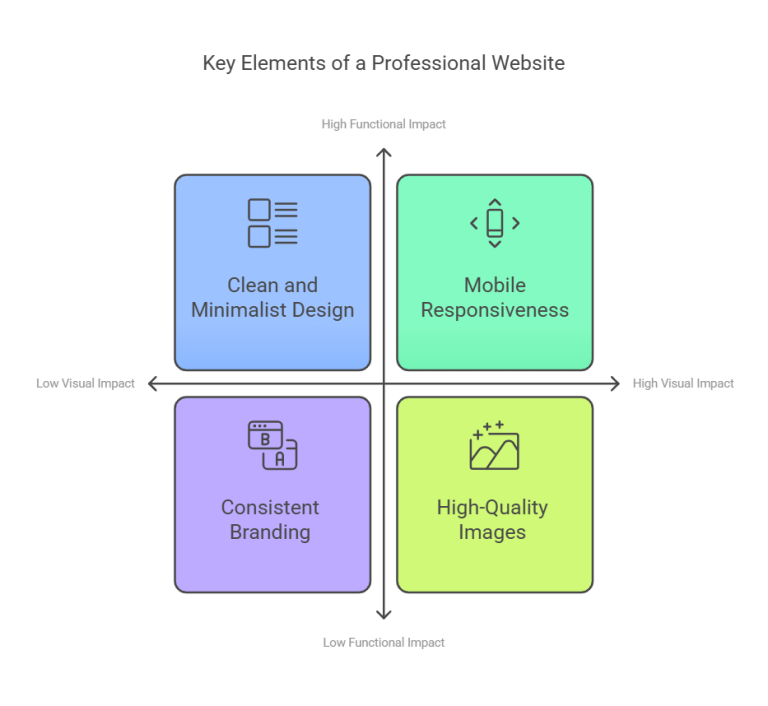

I started by examining and comparing the layouts of many existing websites on the internet.

I also read numerous reviews discussing and comparing website layouts,

and learned about the presentation standards used for public-facing websites on PCs and mobile devices.

In my opinion, the two most important qualities for public-facing layouts are:

- easy-to-use functionality

- visual appeal

I opted for a layout similar to that of the VRT NWS website.

This layout has a fresh, uncluttered look and is also easy to use on both desktop and mobile devices.

Next, I searched for information to implement this look and feel.

I still had a lot to learn, especially in the area of visual appeal:

- more advanced use of CSS and Tailwind CSS:

responsive positioning and sizing of elements,

use of absolute, relative, and flexbox layouts,

dynamic styling with calc() in CSS,

use of clipping, overflow, and aspect ratio for images and videos

- access to royalty-free videos, photos, illustrations, and backgrounds:

I now have a list of free sites that collect these useful elements

- better organization options for Hugo layouts and shortcodes

(this made maintaining my site much easier)

Because many visitors use “https://robertthecoder.org” as the URL instead of “https://www.robertthecoder.org”,

I’ve added this shorter name as a CNAME record on Cloudflare (my DNS registrar).

From now on, both names point to the same site.

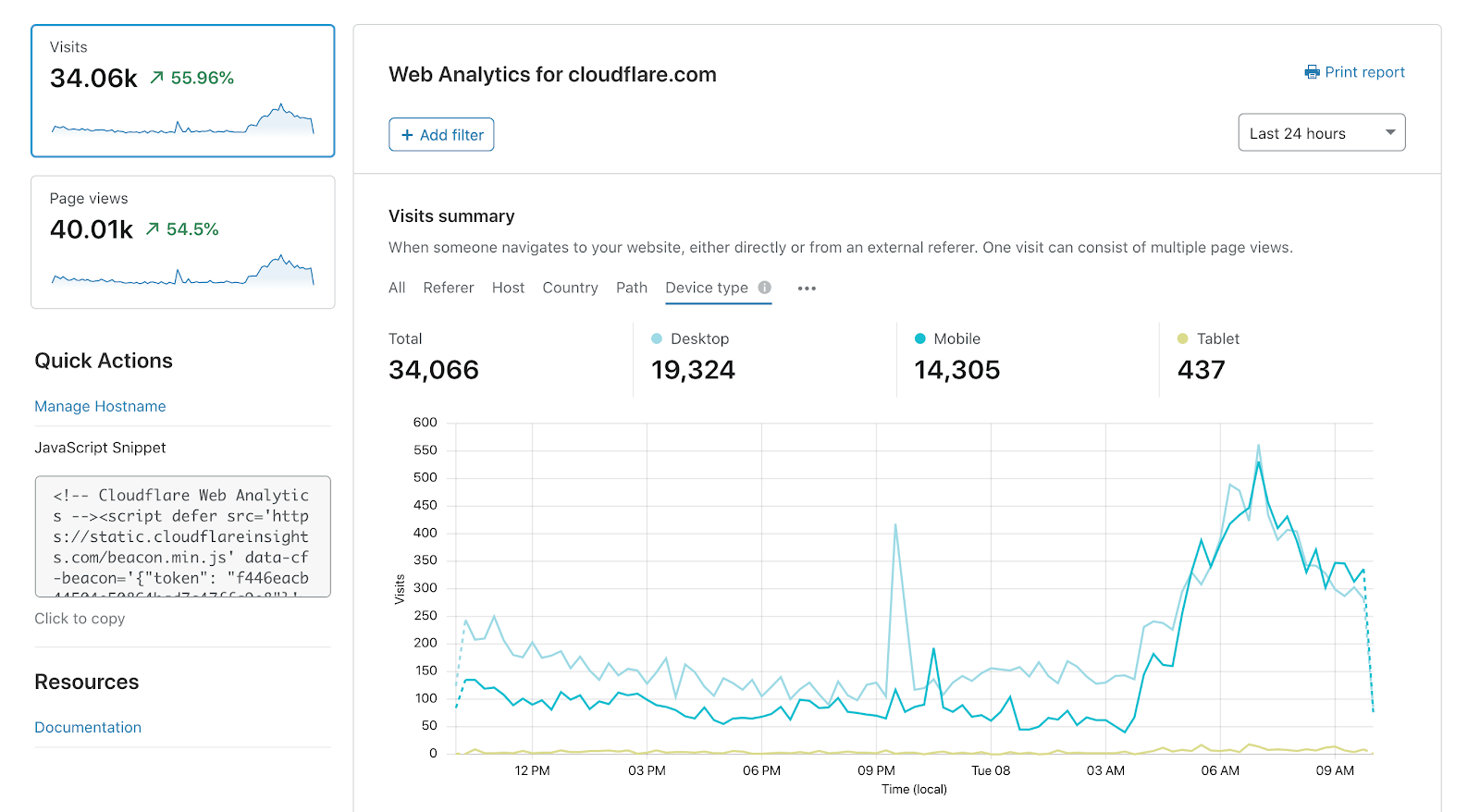

Finally, I also explored available services for analyzing and tracking website traffic.

“Google Analytics” and “Umami” are often chosen as free solutions.

Because I already hosted my website on Cloudflare,

I ultimately chose Cloudflare’s free “Web Analytics” service.

After configuring this service, I can now easily track the following metrics via a dashboard:

page view history, device OS, and browser used by visitors, etc.

2 - Simplify knowledge and documentation management

While carrying out my computer projects, I learned a great deal about computer technology.

I found most of my information through Google search and YouTube videos.

I kept references to all the good information sources and created my own documentation in Markdown files.

Because the volume of information has grown very large and is hard to structure in plain files,

maintaining, expanding, and searching it has become very difficult.

Therefore, I sought a different approach with software support to simplify knowledge management and documentation creation.

To prepare for this, I first grouped all the information I already had into categories.

This took me a lot of time and effort.

After thorough research, I opted for the “Obsidian” software (versus Notion, Capacities).

The approach most commonly used by researchers is the “Zettelkasten” method.

However, developers and DevOps engineers tend to use a more personalized approach with their own structure in Obsidian.

This kind of knowledge management is completely new to me, so I chose to start with my own approach.

That’s why I created two Obsidian knowledge bases or “vaults” (abbreviated “OV”):

- “OV-ComputerTech”:

Contains general information about computer technology that I can share with others.

- “OV-MyHomeLab”:

Contains specific information about my own software, computers, and network configurations that only I can access.

3 - Simplify the installation of server software

In previous projects, I manually installed Proxmox as the OS on my home server.

Afterward, I also installed the LXC container “nas-fileserver” and the virtual machine “LinuxMint” on this “proxmox01” node.

In this project, I want to create several new LXC containers with software

that will be used, among other things, for the development and deployment of custom applications:

“home-utilityserver”, “home-devdepserver”, “home-testappserver”, and “home-prodappserver”.

Later, I will also reorganize the existing “proxmox01”, “nas-fileserver”, and “LinuxMint”,

and rename them to “home-pve” and “home-backupserver”.

I’ll be using an agile approach in future posts to develop an IDP (Internal Developer Platform).

This will be done in small steps that add immediate value.

See Architecture of my home server for the situation after this post.

Because this is very repetitive work, I was looking for a way to simplify and automate software installation.

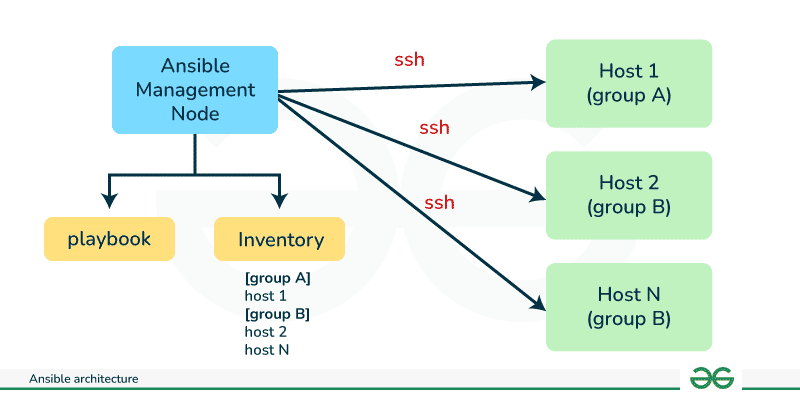

To this end, I first learned the syntax and capabilities of “Bash Scripting” and “Ansible”.

I also learned about “ansible-pull” and “ansible-playbook”, where the playbooks can be stored in a git repository.

I also studied the Proxmox commands (especially “pct”) and the “Proxmox VE Helper Scripts”.

After this study, I created bash scripts to create, delete, and clone an LXC server.

Because almost all LXC servers require the same settings and basic software,

I first implemented an LXC template, “templ-ubuntu”.

In “templ-ubuntu”, I installed the software “Screenfetch”, “Fresh”, “Curl”, “Docker”, “Stow”, “Git”, and “Zsh” via new bash install scripts.

I then used this template server to create all other LXC servers.
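The create, clone, and delete steps can be sketched roughly as follows. This is not the author's Bash code: it is a minimal Python illustration that only builds the `pct` command lines, and the container IDs, the template file name, and the network settings are all hypothetical placeholders.

```python
import shlex

# Hypothetical values: IDs, storage name, and template file are placeholders.
TEMPLATE_ID = 9000
OS_TEMPLATE = "local:vztmpl/ubuntu-template.tar.zst"


def create_cmd(vmid: int, hostname: str) -> list[str]:
    """Build the command that creates a new container from an OS template."""
    return [
        "pct", "create", str(vmid), OS_TEMPLATE,
        "--hostname", hostname,
        "--net0", "name=eth0,bridge=vmbr0,ip=dhcp",  # assumed network setup
        "--unprivileged", "1",
    ]


def clone_cmd(source_id: int, new_id: int, hostname: str) -> list[str]:
    """Build the command that makes a full clone of an existing container."""
    return [
        "pct", "clone", str(source_id), str(new_id),
        "--hostname", hostname,
        "--full", "1",
    ]


def destroy_cmd(vmid: int) -> list[str]:
    """Build the command that deletes a container and its related config."""
    return ["pct", "destroy", str(vmid), "--purge", "1"]


if __name__ == "__main__":
    # Print the commands instead of running them; on a Proxmox node they
    # could be passed to subprocess.run(cmd, check=True).
    print(shlex.join(create_cmd(TEMPLATE_ID, "templ-ubuntu")))
    print(shlex.join(clone_cmd(TEMPLATE_ID, 101, "home-devdepserver")))
    print(shlex.join(destroy_cmd(101)))
```

Keeping the commands as argument lists avoids shell-quoting problems and makes it easy to review them before anything is executed on the node.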

Why is the following software installed on all LXC servers?

- “Ubuntu Server”, “UFW” and “SSH”:

I use the Ubuntu OS as the basis for all my LXC servers, and UFW and SSH are already installed automatically;

I also created an admin user “myadmin” with sudo and ssh privileges for remote access;

UFW (Uncomplicated Firewall) is the default firewall management tool on Ubuntu,

designed to simplify iptables or nftables configuration.

- “Screenfetch”, “Fresh” and “Zsh”:

Screenfetch is a lightweight system info tool that displays OS, hardware, and software details in the terminal;

Fresh is a lightweight, fast and powerful Terminal Text Editor for Linux, macOS and Windows (better than Nano);

Zsh is a shell and programming language for the Unix/Linux command line (compatible with the Bourne/Bash shell);

Zsh is great for interactive use, with advanced autocomplete features, built-in history manipulation, etc.

- “cURL” and “Docker”:

Curl is a command-line tool for downloading or uploading data using various protocols;

Docker is used to download software packages and run them in isolation as containers;

Both tools are often used to install other software (alongside apt as a package manager).

- “Git” and “GNU Stow”:

Git is a distributed version control system and can be used to distribute source code;

Stow is a symbolic link manager that ensures that different software packages,

located in separate folders on the file system, appear to be installed in the same location;

Both software programs are often used to distribute and synchronize software settings and data.
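To make the idea behind Stow concrete, here is a small Python sketch of the core mechanism: linking the contents of a package folder into a target folder. It is a simplified illustration, not how GNU Stow is implemented (Stow creates relative links and can also fold and unfold directory trees), and the folder and file names are made up for the demo.

```python
import tempfile
from pathlib import Path


def stow(package_dir: Path, target_dir: Path) -> list[Path]:
    """Symlink every top-level entry of package_dir into target_dir."""
    links = []
    for entry in sorted(package_dir.iterdir()):
        link = target_dir / entry.name
        # Like Stow, refuse to overwrite something that already exists.
        if link.exists() or link.is_symlink():
            raise FileExistsError(f"refusing to overwrite {link}")
        link.symlink_to(entry.resolve(), target_is_directory=entry.is_dir())
        links.append(link)
    return links


def demo() -> tuple[str, bool]:
    """Stow a hypothetical 'zsh' package into a temporary home folder."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        package = root / "dotfiles" / "zsh"
        package.mkdir(parents=True)
        (package / ".zshrc").write_text("# hypothetical zsh config\n")
        home = root / "home"
        home.mkdir()
        (link,) = stow(package, home)
        # The file is readable from 'home', but it still lives in 'dotfiles'.
        return link.read_text(), link.is_symlink()


if __name__ == "__main__":
    print(demo())
```

Because the real files stay in one folder, that folder can be put under Git, while every program still finds its settings in the usual location.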

Next, I used this LXC template to create the “home-devdepserver” server.

I will use and expand this server later for the development and deployment of my own applications.

I then also installed the “Ansible” software on this LXC via scripts.

4 - Simplify referencing services and applications in home network

First, I wanted to give servers in my home network a logical hostname so I could easily refer to them.

Because I didn’t want to host my own DNS server, I used subdomains of my registered public domain name.

For my registered domain name “robertthecoder.org,” I linked subdomains as logical hostnames to private IP addresses.

Since these are private IP addresses, they are only accessible within my local network.

To easily distinguish these subdomains for private IP addresses from other subdomains,

I always prefix them with “home-”.

So I created a new A record on Cloudflare (my DNS registrar) with the name “home-devdepserver.robertthecoder.org”

and the private IPv4 address of my “home-devdepserver” server.
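The naming convention can be checked with a few lines of Python. This is only a sketch: the IP address below is a placeholder rather than the real one, and the helper names are invented for the example.

```python
import ipaddress

# Hypothetical record: the address is a placeholder, not the real one.
RECORDS = {"home-devdepserver.robertthecoder.org": "192.168.1.50"}


def is_lan_only(address: str) -> bool:
    """Return True when the address is in a private (non-routable) range."""
    return ipaddress.ip_address(address).is_private


def misnamed(records: dict[str, str]) -> list[str]:
    """Return names whose 'home-' prefix and address type disagree."""
    return sorted(
        name
        for name, address in records.items()
        if name.startswith("home-") != is_lan_only(address)
    )


if __name__ == "__main__":
    print(misnamed(RECORDS))
```

A check like this flags both a “home-” name that points to a public address and a name without the prefix that points to a private one.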

To easily access all my services and applications, I created my own home dashboard/portal.

This web dashboard contains widgets and links that have been organized into groups.

For the implementation, I chose the popular and free “Homarr” software

(versus Homer, Heimdall, Glance, Homepage, Dashy).

I gave the server that hosts Homarr the DNS name “home-utilityserver.robertthecoder.org”,

and added an inbound rule to the host firewall to allow access to Homarr.

Most services and applications require a password for access.

To manage these passwords, I’ve been using the free version of “Bitwarden” (vs. “Passbolt”, “LastPass”).

These tools are called online password managers because a network connection is necessary for use.

There are also offline GUI password managers (e.g. “KeePass”) and offline console password managers (e.g. “password-store”).

I also installed KeePassXC on my Windows/Linux machines and Keepass2Android on my Android smartphone.

I created the “KD-MySecrets” database on my local Windows PC.

Next, I manually copied this “KD-MySecrets” database file to my Linux desktop and Android smartphone as well.

I then learned how to use this software on my Windows PC, Linux laptop, and Android smartphone.

5 - Server tools for developing and deploying web apps

My Windows development PC already had “Git,” “VSCode” with “Git Graph,” and “MSEdit” installed.

I also installed “Docker,” “Podman,” and “Portainer” on my Windows PC.

In previous projects, I’ve used the public github.com site for the deployment of my website.

For the development and deployment of my own applications, I also want to use a Git repository manager.

Because I want to host this Git repository manager myself on my home server,

I compared two popular options: “GitLab - Community Edition” and “Gitea - Free Edition”.

I first learned the basics of GitLab on the public gitlab.com site.

There, I learned not only the basic Git commands but also the additional GitLab functionality.

I created a simple HelloWorld application and built it using a GitLab CI pipeline.

I also created a container image for this example application using Docker,

which was then stored in the GitLab Container Registry.

Because GitLab is resource-intensive and offers far more functionality than I need,

I ultimately opted for the “Gitea” software.

Gitea is a lightweight GitHub alternative, specifically designed for self-hosting and includes:

- “Gitea” (vs. “GitLab”, “GitHub”) as a source code repository

- “Gitea Actions” (“GitHub Actions” alternative, vs. “GitLab CI & Runners”, “Jenkins”) as a build server

- “Gitea Package Registry” (vs. “GitLab Registry”, “Nexus”) as a registry for binaries and OCI images

I then installed the latest free version of Gitea.

On the local Gitea server, I first created three “organizations”:

“robertthecoder-app”, “robertthecoder-util”, and “robertthecoder-example”.

From now on, repositories will always be created in one of these “organizations”.
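The organizations can be created in the Gitea web interface, but the step could also be scripted against the Gitea REST API. The sketch below only builds the requests and does not send them. It assumes that Gitea listens on its default port 3000 over plain HTTP on “home-devdepserver” and that an API token has been generated; the base URL, the token placeholder, and the function name are assumptions, not taken from the actual setup.

```python
import json
import urllib.request

# Assumptions: default Gitea port 3000, plain HTTP, and a placeholder token.
GITEA_URL = "http://home-devdepserver.robertthecoder.org:3000"
API_TOKEN = "<api-token>"
ORGANIZATIONS = [
    "robertthecoder-app",
    "robertthecoder-util",
    "robertthecoder-example",
]


def build_create_org_request(
    base_url: str, org_name: str, token: str
) -> urllib.request.Request:
    """Build (but do not send) a request that creates one organization."""
    body = json.dumps({"username": org_name}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/v1/orgs",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"token {token}",
        },
    )


requests_to_send = [
    build_create_org_request(GITEA_URL, name, API_TOKEN)
    for name in ORGANIZATIONS
]

if __name__ == "__main__":
    for request in requests_to_send:
        # Sending would be: urllib.request.urlopen(request)
        print(request.get_method(), request.full_url, request.data.decode())
```

Separating request building from sending makes it possible to inspect exactly what would be created before touching the server.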

So in this section I installed the Gitea software alongside Ansible on “home-devdepserver”.

In the previous sections, I created folders on my local Windows machine:

dotfiles folder for Stow, “KD-MySecrets” database for KeePass, vaults “OV-ComputerTech” and “OV-MyHomeLab” for Obsidian.

To make these folders accessible everywhere and to maintain backups,

I created git repositories for them in Gitea.

New knowledge will henceforth be stored in these Obsidian vaults,

and existing information will gradually be incorporated into these vaults as well.

6 - Installation of JetKVM

The IP-based KVM from JetKVM has only now been delivered (see blog post from October 1, 2025).

This KVM was connected to my home server and tested.

I will use this JetKVM later to completely reinstall the existing “proxmox01” and “nas-fileserver”

as “home-pve” and “home-backupserver” using script files.